Learning SaltStack: a Cloud Template for vRA

Following VMware's acquisition of SaltStack, learn more about Salt. Try this vRA cloud template to deploy a simple Salt environment.

Just over a week ago VMware completed its acquisition of SaltStack and we've welcomed this strategic technology into our Cloud Management journey. The folks that I've heard from already seem to share VMware's values and know their stuff. This, along with the technology and experience that they bring with them, makes me very excited for the future of vRA and Cloud Management and automation in general at VMware.

Of course, the fine details about how SaltStack will integrate and operate aren't public yet. However, if you're using vRA Cloud or vRA 8.x, then at some point in the future you're likely to encounter SaltStack. For that reason I'm making sure that I spend a little time raising my game with respect to Salt over the next few months. Wouldn't it be nice if I could deploy a small SaltStack environment to mess with?

One of my colleagues, Vincent Riccio, was very quick to assemble a vRA Cloud Template to do just that and he created a blog post to showcase it. Kudos to Vincent for that but I wanted (and needed) to make a couple of tweaks to suit my purposes.

Salt Communication

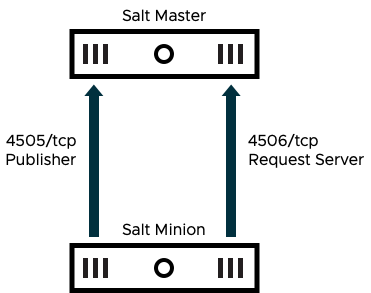

In a very simple setup you just need a Salt Master and one or more Salt Minions to get started with your learning journey. I ran into a couple of minor challenges in my lab that I had to solve to get to this point though. The key one was enabling communication between the master and the minion.

Communications between minions and the master are initiated by the minions, meaning that network traffic is outbound from the minions to the master. No ports need to be opened on the minions, but the master needs two ports open to receive that traffic. Their functions are:

- The Publisher port (4505) is used by all connected minions to listen for messages / instructions from the master. These messages are sent asynchronously to minions.

- The Request Server port (4506) is used for synchronous traffic between each minion and the master. It carries results back from each minion to the master, and minions use it to request files and minion-specific data (known as Salt pillar) from the master.

The problem I had with the original Cloud Template was that my guest OSs have firewalls enabled, so the master needs ports 4505 and 4506 opened. On lines 44 - 46 of the template immediately below you'll see where I've added this to the cloud-init commands executed on the Salt Master.
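For anyone who wants to try the same fix, here is a minimal sketch of what such a firewall step might look like in cloud-init. It assumes the CentOS guest runs firewalld, and it is an illustration rather than an excerpt from the template itself:

```yaml
#cloud-config
# Sketch only: assumes firewalld is the active firewall on the Salt Master.
runcmd:
  # Publisher port: minions listen here for instructions from the master
  - firewall-cmd --permanent --add-port=4505/tcp
  # Request Server port: minions return results and request data here
  - firewall-cmd --permanent --add-port=4506/tcp
  # Apply the permanent rules to the running firewall
  - firewall-cmd --reload
```

Nothing equivalent is needed on the minions, because they only make outbound connections to the master.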

Other Minor Changes

If you perform a diff against the original template you'll notice a couple of other minor changes that I'll explain briefly.

- On lines 15 and 33 I updated the template to use my own image mapping, and switched from Ubuntu to CentOS.

- Similarly, on lines 16 and 34 I changed the flavor mapping to match my lab.

- In the cloudConfig section for the minion (lines 20 - 27) I added naming options for the guest OS so that name resolution would function correctly with my DNS. Additionally, when installing the minion, I have provided the hostname of the Salt Master instead of the IP.

- In the cloudConfig section for the master (lines 38 - 48) I also set the hostname for correct DNS function in my lab. I added the firewall commands mentioned earlier and stripped out the SSH configuration, as that's built into my CentOS image anyway.

- The tags I use are a little different so I had to update the constraints etc.
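To make the minion-side changes a little more concrete, here is a hedged sketch of a cloud-init payload that sets the guest hostname and points the minion at the master by name. The hostnames and the `lab.example` domain are placeholders, not values from my lab. Note that it swaps in a different technique from the one described above: rather than passing the master's hostname at install time, it writes a drop-in file under `/etc/salt/minion.d/`, and it assumes the minion package is installed by some other step.

```yaml
#cloud-config
# Sketch only: all names below are hypothetical placeholders.
hostname: salt-minion-01
fqdn: salt-minion-01.lab.example
preserve_hostname: false

write_files:
  # Drop-in minion config; files in minion.d are read alongside /etc/salt/minion
  - path: /etc/salt/minion.d/master.conf
    permissions: '0644'
    content: |
      # Use the master's DNS name instead of its IP address
      master: salt-master.lab.example
```

Using a name rather than an IP only works if DNS in the lab resolves it, which is why the hostname settings matter here.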

Just to explain the property hostname on lines 17 and 35: I set this property when I want to make sure that the deployment resource is named for the hostname that the guest OS has. I have an event subscription in vRA that takes this property and renames the resource with the value. Hence when the machine is deployed, it uses this name. I won't go into too much detail about that process here, but the script for the action is below:

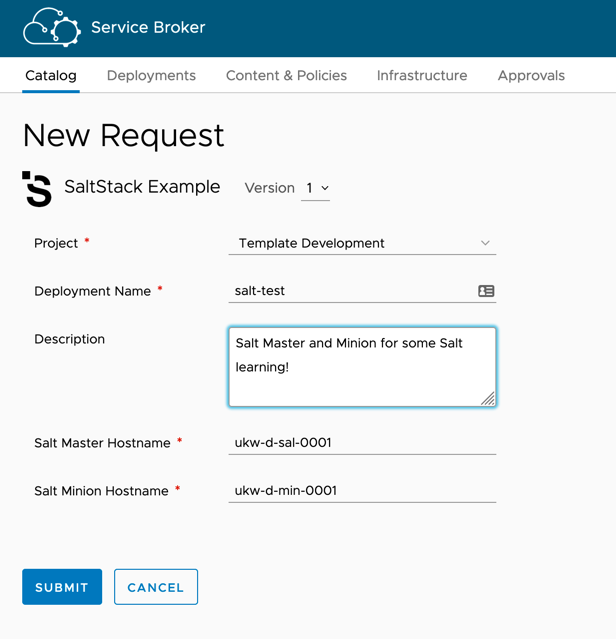

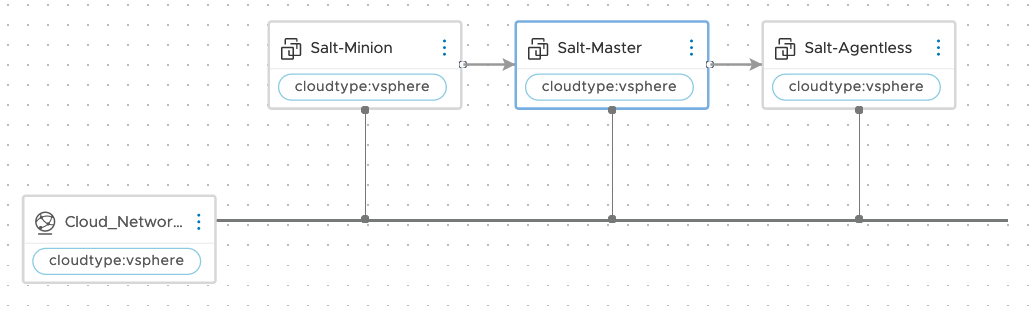

So, now I have a cloud template that deploys a master and a minion that I can play with, it's time to publish it and apply a nice icon (sourced from SaltStack's branding page). And test it all of course!

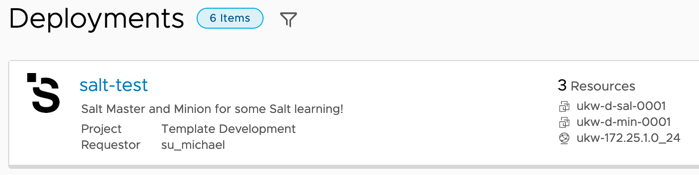

Fast-forward a few minutes and I have a deployment that I can use to get more familiar with SaltStack.

Agentless Option

This is all well and good, but what if you don't want to install a minion on every host that you want to use Salt with? It's covered.

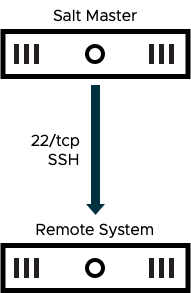

SSH can be used in place of the conventional minion software to manage a remote host. For this to work the Salt Master must be able to establish an SSH session to the remote system, and all traffic runs across that connection once it is established. Configuration of the remote systems is fairly minimal. There are, however, two requirements.

- Salt requires python3 to be installed on the remote system.

- The Salt Master needs some form of credentials to authenticate via SSH.

All of this is simple enough to manage in vRA. Installing a package and configuring SSH access are both easily accomplished via cloud-init.
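As a rough sketch of how those two requirements could be met on the remote system, assuming key-based SSH authentication: the user name and the public key below are hypothetical placeholders, and you would substitute the public half of whichever key your Salt Master uses.

```yaml
#cloud-config
# Sketch only: user name and key are placeholders, not values from my template.
packages:
  # Salt needs Python 3 on the remote system
  - python3

users:
  # Account that the Salt Master will log in as over SSH
  - name: saltadmin
    shell: /bin/bash
    # Passwordless sudo so that states needing root can run
    sudo: ALL=(ALL) NOPASSWD:ALL
    ssh_authorized_keys:
      - ssh-rsa AAAA...replace-with-the-master-public-key... salt-master
```

A password could be used instead of a key, but a key avoids putting a secret for the remote host into the template in plain text.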

Roster File

For the Salt Master to know about the remote system, a roster file is needed on the master. Also, the salt-ssh package is needed on the master. This latter item can be achieved with a simple dnf command:

dnf install -y salt-ssh

Creating the roster file is also fairly straightforward, again through cloud-init using a write_files directive. All we need to provide is a path and some content:
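A minimal sketch of such a directive is shown here. It assumes the default roster location of `/etc/salt/roster` and key-based authentication; the ID, address, user name and key path are illustrative placeholders rather than the values from my own template.

```yaml
#cloud-config
# Sketch only: every value inside the roster entry is a placeholder.
write_files:
  - path: /etc/salt/roster
    owner: root:root
    permissions: '0600'
    content: |
      # The top-level key is the ID that Salt will use to target this host
      remote-host-01:
        host: 192.0.2.10        # address of the remote system
        user: saltadmin         # account used for the SSH session
        priv: /etc/salt/pki/master/ssh/salt-ssh.rsa   # private key on the master
        sudo: True              # run commands via sudo on the remote system
```

The public half of that key is assumed to be authorized for the user on the remote system already.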

The format of the roster file is simple enough: we need an ID (I'm using the remote system's hostname), an address and credentials. There are lots of other options possible, but I'm just going with the simplest for now. Of course, for this to work the Salt Master needs details of the remote system, so it is dependent on the remote system in my cloud template. It makes it look like this:

And here's the final version of the cloud template:

Have fun, and learn Salt!

SaltConf20

Want to know more about Salt? There's lots of info on SaltStack's docs site, but you should consider registering for SaltConf.