Custom Naming in vRealize Automation 8.x (Pt. 2)

Having previously created a mechanism to generate unique hostnames, in this article I'll follow on with how to integrate that functionality with vRA 8.x.

In the previous article I covered the inspiration for this from Mark Brookfield's post on Custom Naming in vRealize Automation 7.x. I also touched on the built-in mechanisms for workload naming in vRA 8.x and then moved on to my independent host-naming solution for both vRA 8.x and vRA 7.x.

In this part I'll cover off how to extend vRA 8.x to make use of my naming solution.

Extending vRA 8.x to Request Hostnames

Now that unique hostnames can be generated on our standalone vRO instance, we need to consume them from vRA 8.x. There are two things we need to configure here: the first is a workflow that constructs the request to send to the remote Orchestrator instance and handles the returned name(s); the second is an event subscription to trigger the workflow.

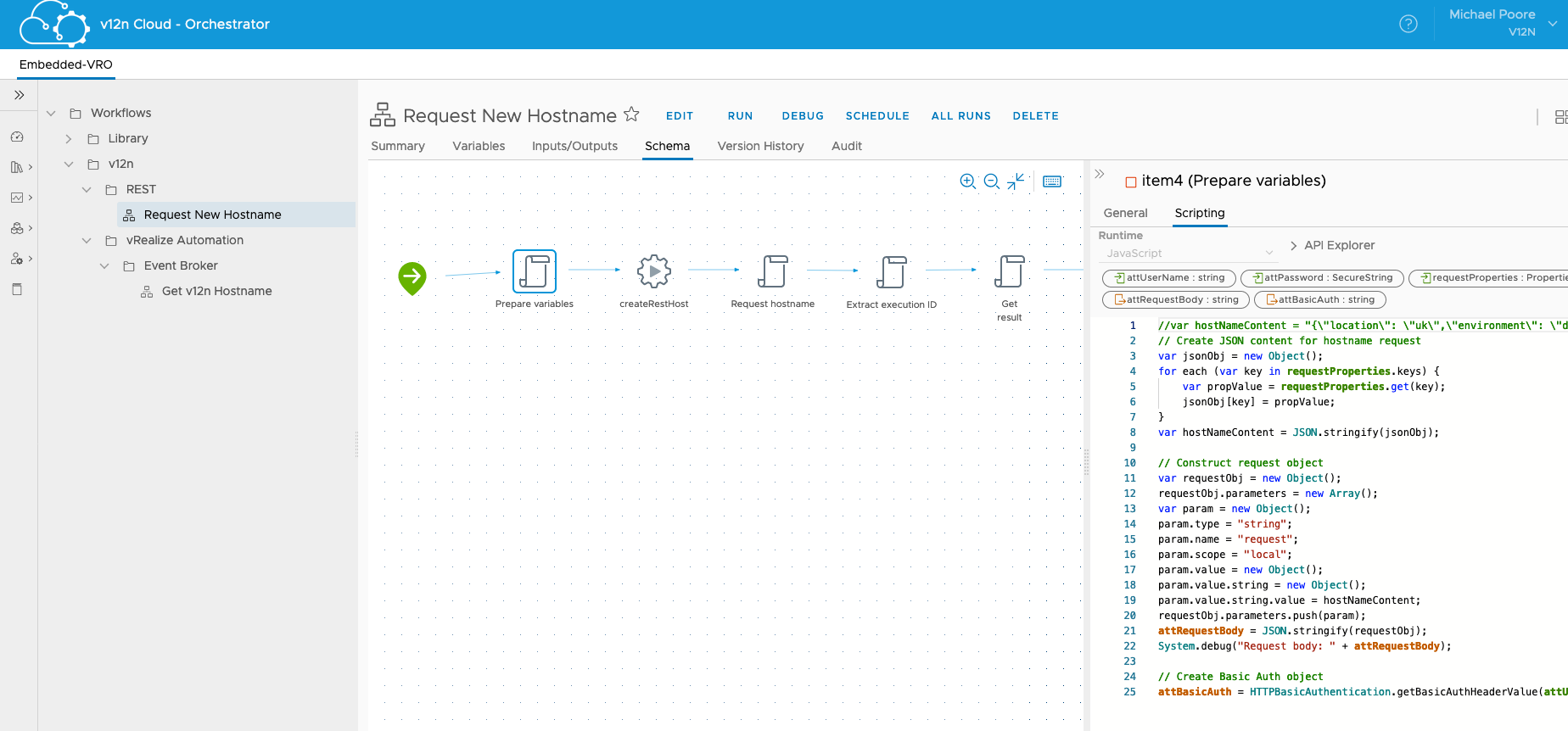

Local vRO Workflow for vRA 8.x

This workflow lives in the embedded vRO instance that ships with vRA 8.x. Its purpose is to be triggered automatically, pick up the necessary values, pass them on to the remote vRO, and then update the deployment resources with the generated hostnames.

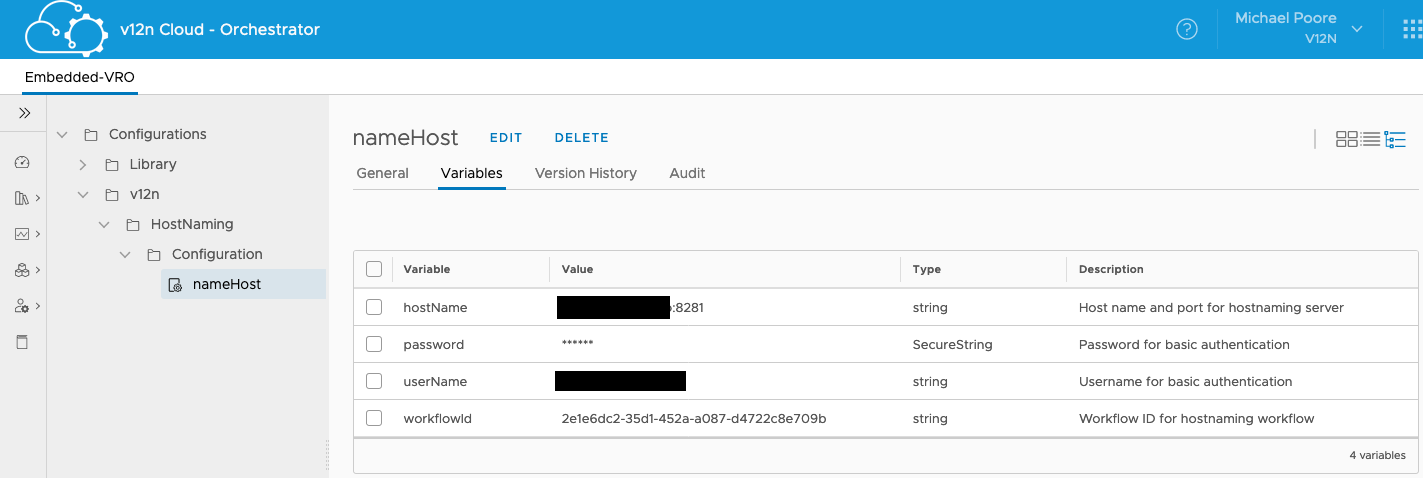

Our first step is to create a Configuration Element with a few needed pieces of configuration:

In case it's not obvious, the hostname:port should be that of the remote vRO instance. I've hidden the username because that's not the sort of thing I want anyone to know!

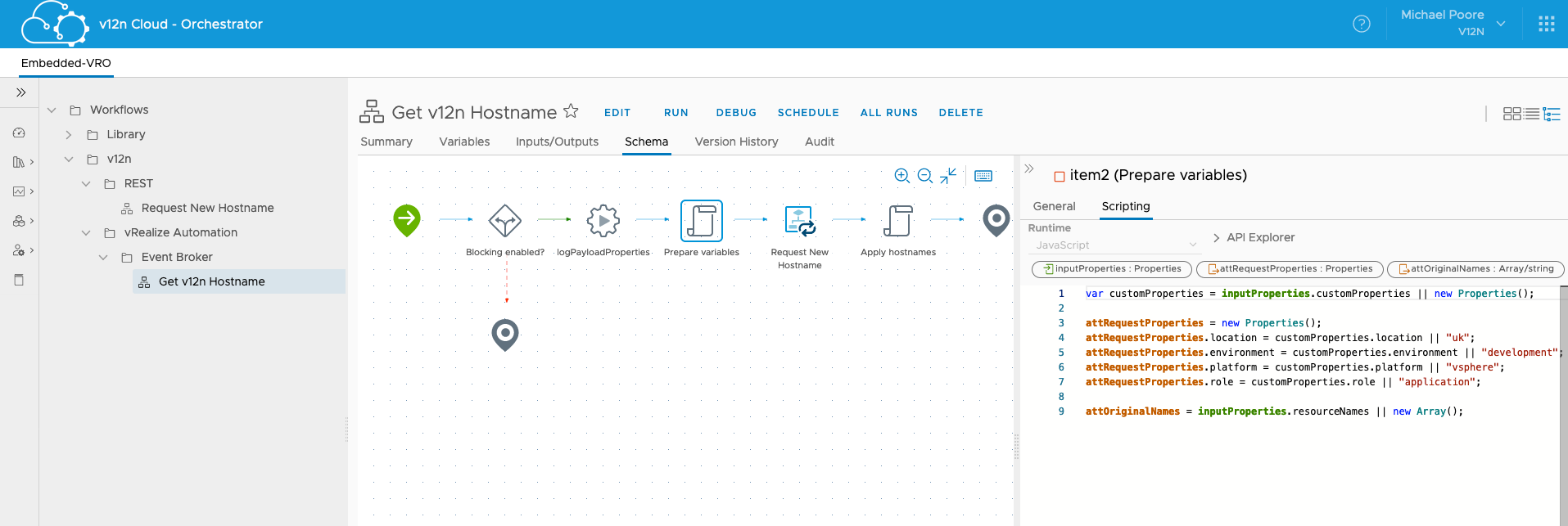

Next up we need a couple of workflows. The first of those is the one that will be triggered by the event subscription. It needs a single input called "inputProperties" of type Properties. This will be populated automatically by vRA. If you want to learn about what comes across in this object, my "logPayloadProperties" action helps greatly:
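As a rough illustration of the kind of thing a payload logger does, here's a minimal sketch in plain JavaScript. It is an assumption about the general approach, not the actual "logPayloadProperties" code: `describePayload` is a hypothetical name, and the example field values are made up. Inside vRO you would read the keys from the Properties object and write each line with `System.log()` rather than returning an array.

```javascript
// Hypothetical sketch: flatten a nested payload into "path = value" lines.
// Plain objects and a returned array are used so the snippet runs anywhere;
// in vRO the input would be a Properties object and the lines would be logged.
function describePayload(value, path, lines) {
    lines = lines || [];
    path = path || "";
    if (value !== null && typeof value === "object") {
        var keys = Object.keys(value);
        if (keys.length === 0) {
            // Record empty containers so they are not silently skipped.
            lines.push(path + " = " + (Array.isArray(value) ? "[]" : "{}"));
        }
        for (var i = 0; i < keys.length; i++) {
            var key = keys[i];
            var childPath = Array.isArray(value)
                ? path + "[" + key + "]"
                : (path ? path + "." + key : key);
            describePayload(value[key], childPath, lines);
        }
    } else {
        lines.push(path + " = " + String(value));
    }
    return lines;
}

// Illustrative payload only: real payloads carry many more fields.
var examplePayload = {
    resourceNames: ["machine-1"],
    customProperties: { role: "web" }
};
describePayload(examplePayload).forEach(function (line) {
    console.log(line);
});
```

Dumping the payload like this once, on a test deployment, is usually enough to see exactly which keys are available to the rest of the workflow.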

What we're after here is extracting the custom properties from the requested resource and putting them into the onward request. I'll highlight where they are set in the blueprint later.
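To make the extraction step a little more concrete, here's a minimal sketch of pulling a known set of naming properties out of the payload. The property names (`namingSite`, `namingEnvironment`, `namingRole`) and the function name are hypothetical placeholders, not the ones used in my workflow. In vRO, `inputProperties` is a Properties object, so you would typically fetch the nested value with `inputProperties.get("customProperties")`; a plain object is used here so the snippet runs on its own.

```javascript
// Hypothetical property names: substitute whatever your blueprint sets.
var NAMING_KEYS = ["namingSite", "namingEnvironment", "namingRole"];

// Copy only the naming-related custom properties into a new object,
// failing early if any of them is missing or empty in the payload.
function pickNamingProperties(inputProperties, keys) {
    var custom = inputProperties.customProperties || {};
    var picked = {};
    for (var i = 0; i < keys.length; i++) {
        var key = keys[i];
        var value = custom[key];
        if (value === undefined || value === null || value === "") {
            throw new Error("Missing custom property: " + key);
        }
        picked[key] = String(value);
    }
    return picked;
}
```

Failing early on a missing property is a design choice: it surfaces a badly configured blueprint in the workflow run rather than as an odd-looking hostname later on.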

After doing this, another workflow is called once for each of the resources in the subscription payload. If we have just a single machine, it's just a single execution. If we have 2, 3, 4, etc. instances, then we need multiple unique names.

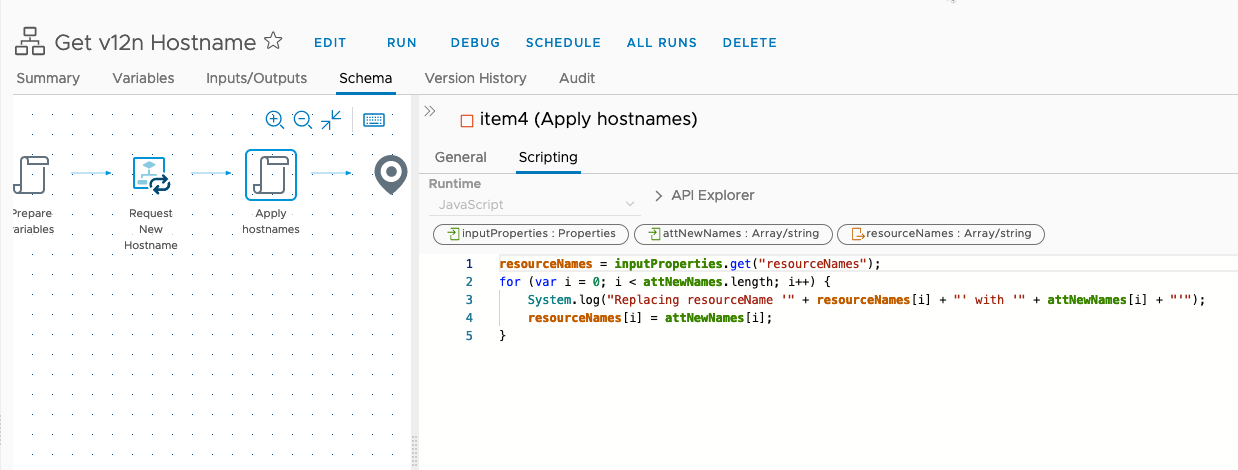

After the nested workflow executes, we take the output from the run(s) and replace the resource names:
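The per-resource loop and the name replacement can be sketched roughly as follows. This is a simplified stand-in rather than the workflow itself: `requestHostname` is a hypothetical callback representing the nested workflow call, and the stub generator and sample names exist only so the snippet runs outside vRO.

```javascript
// Request one new name per original resource name and return the new list.
// requestHostname is a hypothetical stand-in for the nested workflow.
function buildResourceNames(originalNames, namingProperties, requestHostname) {
    var newNames = [];
    for (var i = 0; i < originalNames.length; i++) {
        var name = requestHostname(namingProperties);
        if (!name) {
            throw new Error("No hostname returned for " + originalNames[i]);
        }
        newNames.push(name);
    }
    return newNames;
}

// Stub generator for demonstration only, so the sketch runs without vRO.
var stubCounter = 0;
function stubRequestHostname(props) {
    stubCounter += 1;
    return props.namingRole + "-" + stubCounter;
}

var resourceNames = buildResourceNames(
    ["generated-name-a", "generated-name-b"],
    { namingRole: "web" },
    stubRequestHostname
);
console.log(resourceNames.join(", "));
```

In the workflow, the resulting array would be bound to an output named "resourceNames" (an array of strings), which is how the replacement names get back to vRA when the subscription completes.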

This will hopefully make more sense when we look at the subscription.

As for that nested workflow, that's the bit that reaches out to the remote Orchestrator to execute the naming workflow there.

The basic process followed is:

- Create a JSON object of the properties that we want to place in the request

- Create a Basic Auth header for the REST request to vRO

- Prepare the REST connection to vRO

- Execute the REST call to start the remote workflow

- Extract the execution ID

- Check the remote execution to make sure that the workflow completes successfully

- Extract the returned hostname and set it as the output
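The steps above can be sketched as a handful of small helpers. Treat this as an illustration under stated assumptions rather than the packaged workflow: the function names are hypothetical, the request and response shapes reflect my understanding of the vRO REST API (a `parameters` array in the request body, a `Location` header ending in the execution ID, and `output-parameters` in the execution details), and the HTTP calls themselves are left out so the snippet runs without a vRO instance. `Buffer` is Node.js only, so inside vRO the Base64 encoding would need to be done another way.

```javascript
// Build the JSON body used to start a workflow. Every parameter is sent
// as a string in this sketch.
function buildExecutionBody(params) {
    var parameters = Object.keys(params).map(function (name) {
        return {
            name: name,
            type: "string",
            value: { string: { value: String(params[name]) } }
        };
    });
    return JSON.stringify({ parameters: parameters });
}

// Basic Auth header value (Node.js Buffer used for the Base64 step).
function basicAuthHeader(username, password) {
    var token = Buffer.from(username + ":" + password, "utf8").toString("base64");
    return "Basic " + token;
}

// Assumes a Location header of the form
// .../workflows/{workflowId}/executions/{executionId}/
function executionIdFromLocation(location) {
    var match = /\/executions\/([^\/]+)\/?$/.exec(location);
    if (!match) {
        throw new Error("No execution ID in: " + location);
    }
    return match[1];
}

// Poll until the remote run finishes. getState is a hypothetical callback
// that fetches the execution state and returns it as a string. A real
// workflow would pause between polls (for example with System.sleep in vRO).
function waitForCompletion(getState, maxPolls) {
    for (var i = 0; i < maxPolls; i++) {
        var state = getState();
        if (state === "completed") {
            return;
        }
        if (state === "failed" || state === "canceled") {
            throw new Error("Remote workflow " + state);
        }
    }
    throw new Error("Timed out waiting for remote workflow");
}

// Pull a named string value out of the execution's output parameters.
function extractStringOutput(execution, name) {
    var outputs = execution["output-parameters"] || [];
    for (var i = 0; i < outputs.length; i++) {
        if (outputs[i].name === name) {
            return outputs[i].value.string.value;
        }
    }
    throw new Error("Output not found: " + name);
}
```

Keeping the parsing and request-building separate from the REST calls makes each step easy to check on its own, which helps when the remote workflow misbehaves and you need to work out which step went wrong.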

I'll package all of this up soon and make it available. It's easier to see than it is to describe!

vRA 8.x Event Subscription

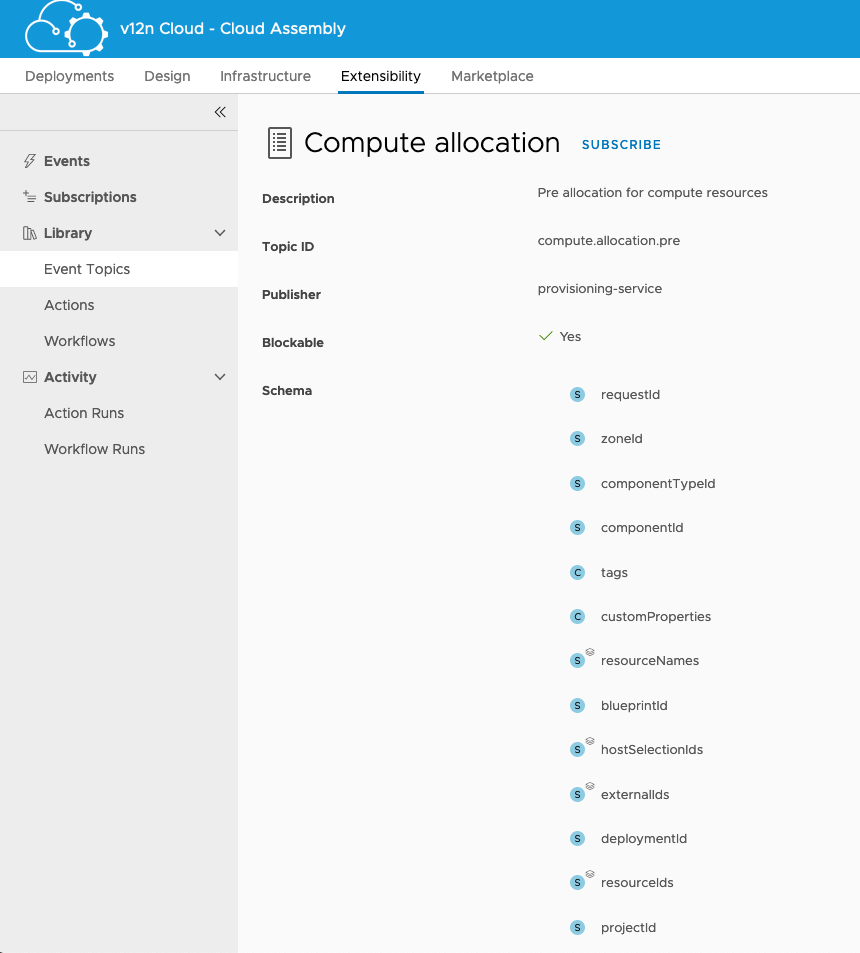

Now that we have our workflow in place, it's time to create the subscription that will trigger it. In Cloud Assembly the Extensibility tab is used to access details about subscriptions.

Looking at the Event Topics, we can drill down and find out details about the "Compute Allocation" topic. This is the topic we need.

For our purposes, the two things we need are "customProperties" and "resourceNames". The former contains all of the properties for the resource that triggers this subscription. The latter initially contains the automatically generated names of the resources. The workflows above work in tandem to overwrite those names.

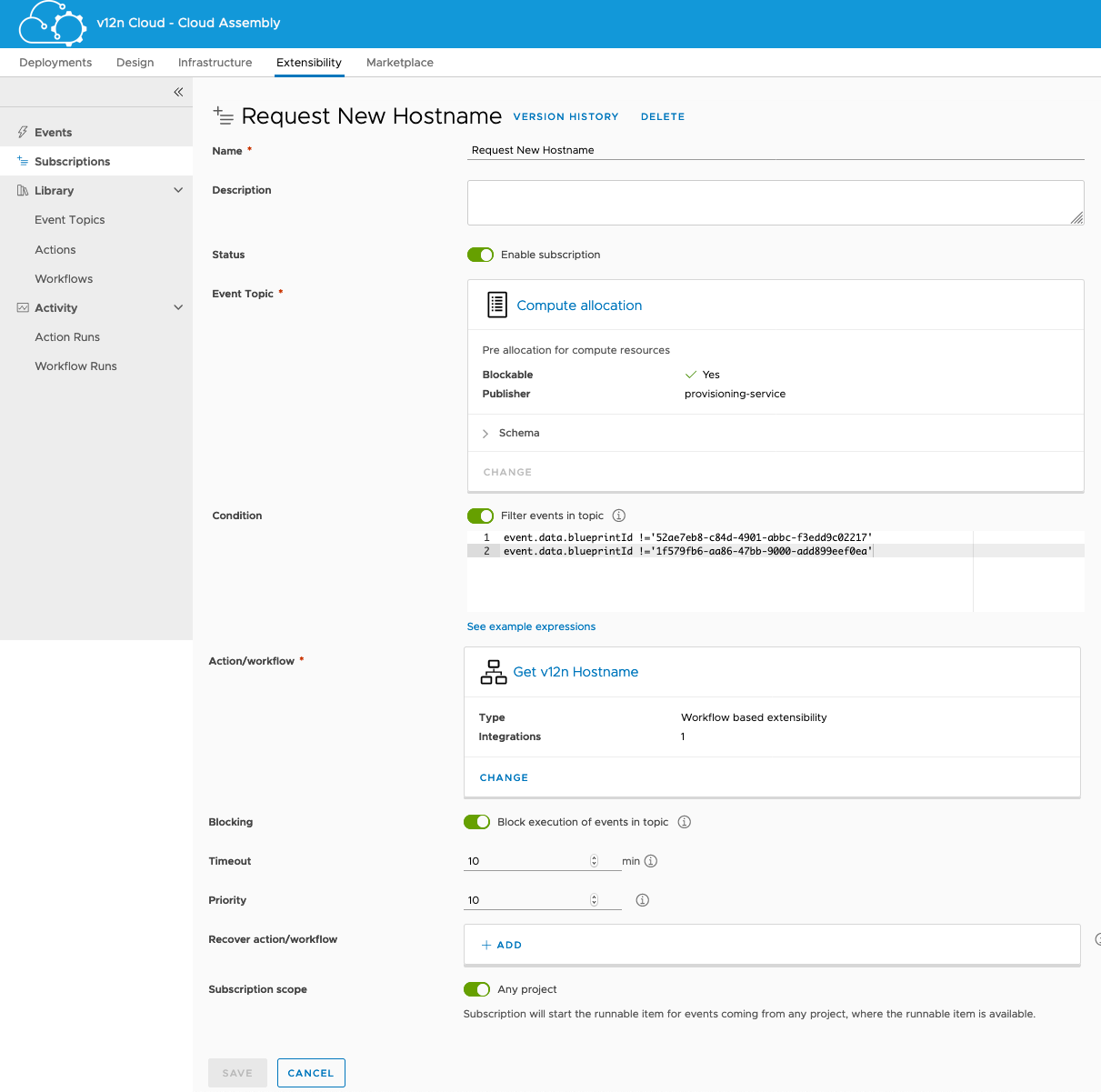

To subscribe to this topic, you can either click the Subscribe link or add a subscription from the Subscriptions pane using the menu on the left. Either way, what we want is to configure a subscription that looks a bit like this:

- Name - Give it a name, something meaningful

- Status - We want this enabled

- Event Topic - This needs to be Compute Allocation

- Condition - Now, this is up to the individual. I have specifically excluded two blueprints from triggering this subscription for my own reasons. Ideally I wouldn't have any listed here.

- Action / Workflow - This is the workflow that we want to trigger.

- Blocking - This must be enabled or vRA won't wait for the names to come back.

- Timeout - Left at default.

- Priority - Left at default.

- Recover Action / Workflow - Not used.

- Subscription Scope - I have activated it for all Projects. This saves me having to configure the functionality for each individual Project.

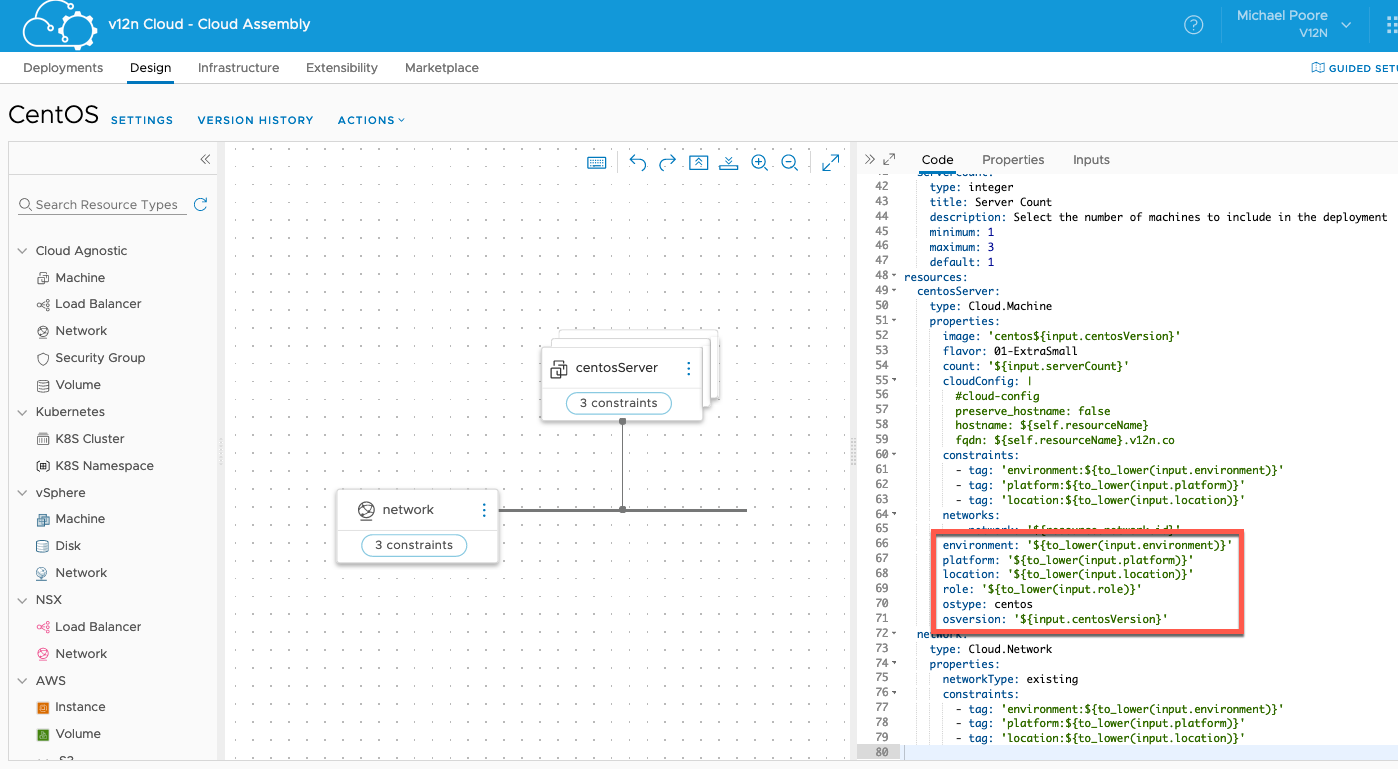

So now that there is a subscription, we just need to add properties to the blueprints so that they can be picked up. For example, let's look at my CentOS blueprint:

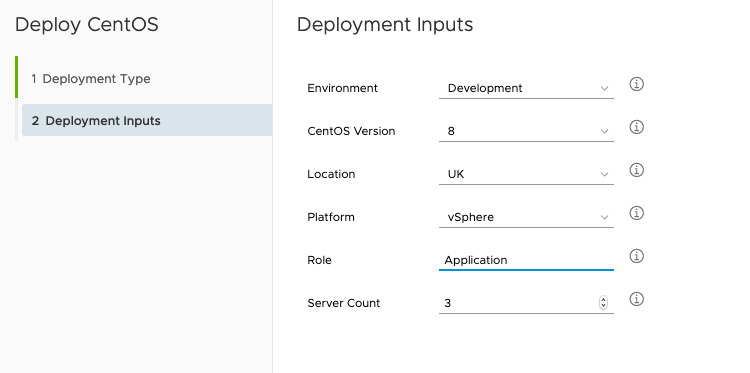

I've highlighted the properties that I'm adding to the centosServer resources. Hopefully you can see how they marry up to the naming elements picked up and used downstream. In the above example the values are being selected by the requestor at request time. You could easily hard-code these into the blueprint though.

Does it work? Why yes! Let's make a request...

Above, I'm requesting a new deployment from my blueprint. I've left the defaults for the most part except for specifying 3 servers instead of the default (1).

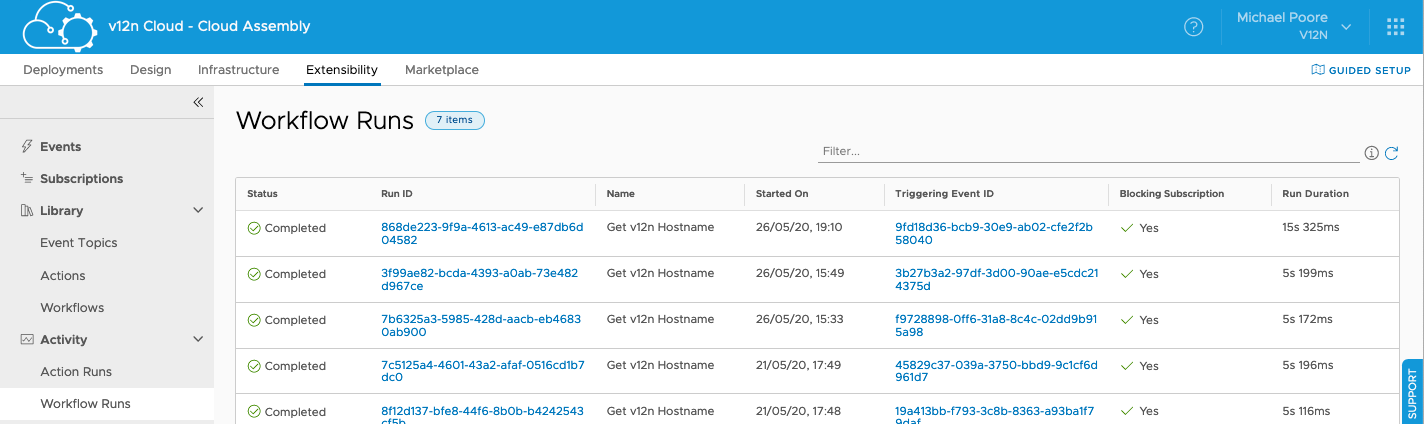

Looking at the Workflow Runs in Cloud Assembly, you can see that the subscription has triggered.

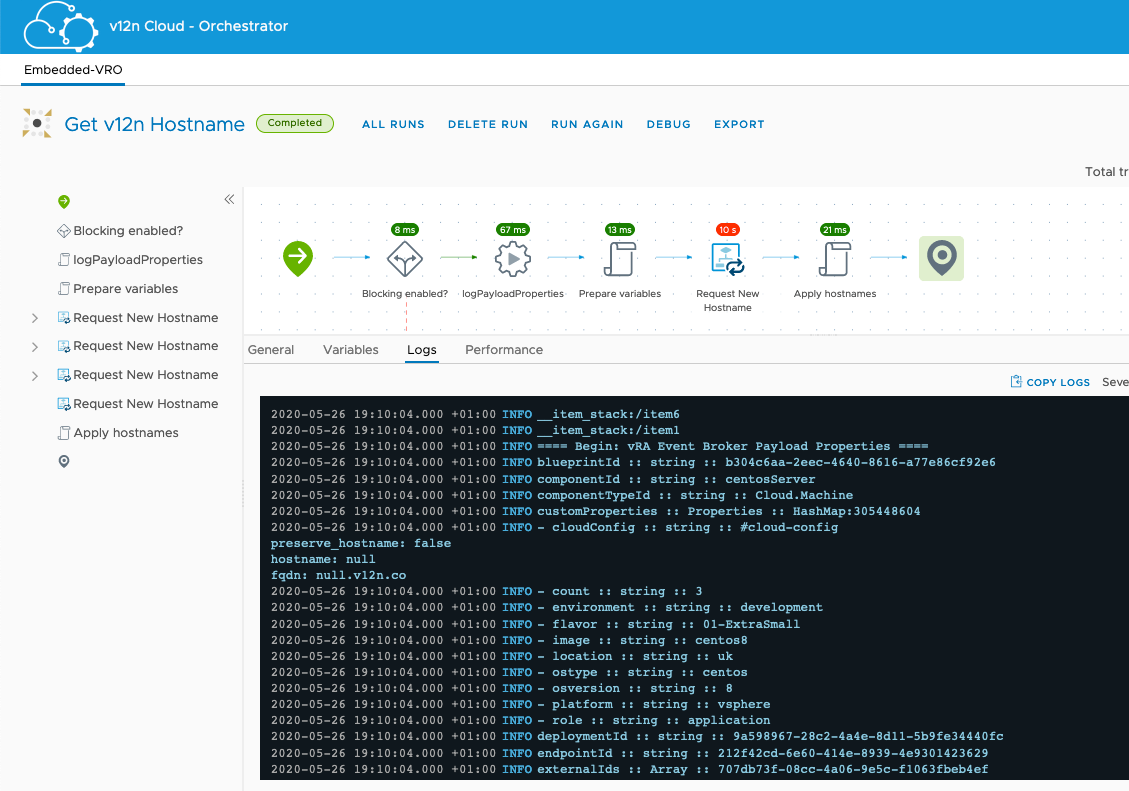

Moving swiftly across to Orchestrator, you can view the logs from the workflow run and see "logPayloadProperties" log out the properties that it received.

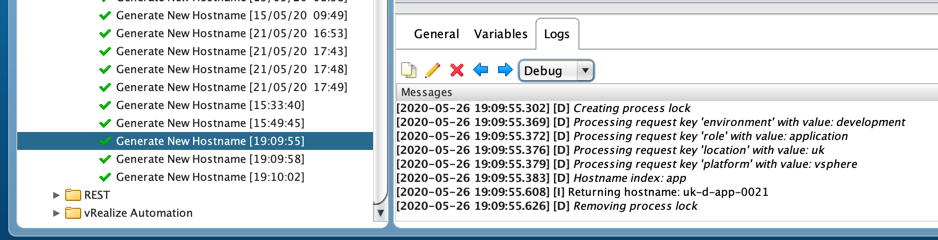

Next stop, the remote Orchestrator. There are three executions, one for each of the servers in the request.

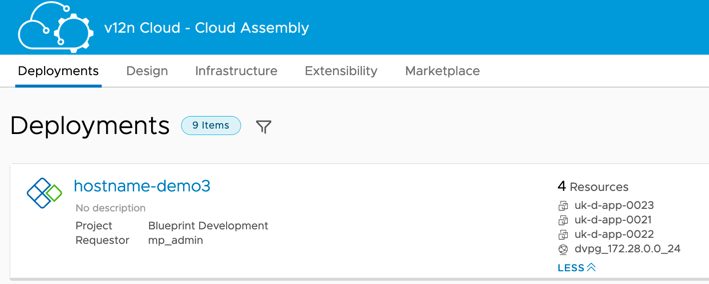

Finally, there's my deployment completed. Three servers with appropriate names. And, because the template has cloud-init enabled and the blueprint uses it, the hostnames in the guest OSs match up to their names in vCenter.

Summary

So there you have it. It works and it does the job that I want it to do. The eagle-eyed out there will of course have spotted a flaw or two. For one, when the number associated with a role gets up to 9900 or so, I'll need to work out how to handle rolling over the number.

There are probably plenty of ways that I can improve this, and I will, but for now that's it.